University of Oklahoma Cyberinfrastructure Plan - 2022

Cyberinfrastructure Plan: University of Oklahoma and OneNet

1. University of Oklahoma Cyberinfrastructure Plan

1.1. OneOklahoma Friction Free Network (OFFN) at OU

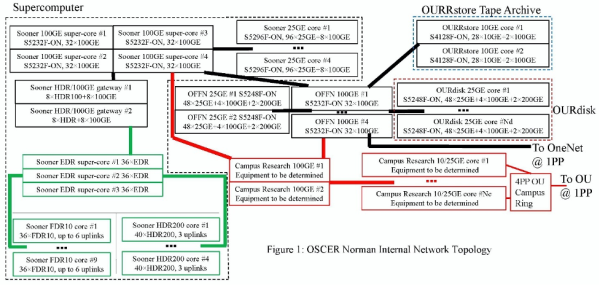

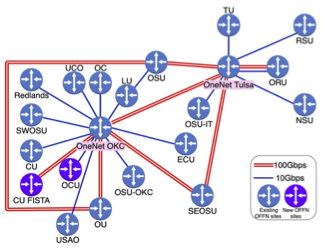

The OneOklahoma Friction Free Network (OFFN, see Figs. 1, 2) was established by OU’s first NSF CC* grant in 2013 and, thanks to further grants, will soon cover 20 campuses statewide. OFFN involves active collaboration between OU and OneNet (Oklahoma’s research and education network, and a division of Oklahoma’s State Regents for Higher Education). As part of this collaboration, OU has served as the lead PhD-granting institution on CC* grants from consortia of Oklahoma non-PhD-granting institutions, for which OSCER system administrator Kali McLennan plays an active role in deployment and training.

Connectivity between OU Norman and OU Health Sciences Center (OUHSC) in Oklahoma City is being deployed in phases. Phase 1 has two circuits: (1) A “best effort” Virtual Local Area Network (VLAN) transport across OU’s existing data center fabric, for management and low-speed replication of OFFN components at OUHSC, providing baseline connectivity for the OFFN control plane plus OURRstore and OURdisk at OUHSC. (2) A single dedicated 100 Gbps Ethernet (100GE) service provided by OneNet, to integrate the OUHSC OFFN components into the data plane of the statewide data transport system. Future phases include replacing the “best effort” VLAN via integration with the soon-to-be-deployed 100GE data center fabric, to supplement the OneNet data plane circuit with more capacity and redundancy.

OUHSC OFFN components will reside in OUHSC’s premier data center, Nicholson Tower, to allow OFFN Data Transfer Nodes (DTNs) flexible access to campus data centers and networks, and to OFFN statewide. This flexibility allows shared or dedicated data paths between STEM research facilities and OFFN-connected CI (see below). The initial OFFN connectivity for OUHSC will be 100GE facing the OFFN network via a dedicated OneNet path, and multiple circuits to the campus network as research needs dictate. Future connectivity will include native 40GE and 100GE connections into OFFN and the OUHSC data center.

OU is undergoing a lifecycle refresh of its data center fabric, Dense Wavelength Division Multiplexing (DWDM) transport circuits, Internet routing hardware, and campus connections at OU Norman, OUHSC and OU Tulsa. Each upgrade better positions OU as both a consumer of, and a contributor to, OFFN. The data center refresh will update current 10GE connectivity to native 25/40/100GE, and will position OU for 400GE, to provide greater throughput between OFFN connected researchers and CI hosted in OU’s data centers. DWDM transport updates will bring native 100GE circuit services to OU's three campuses. The Internet routing hardware updates will bring native 100GE Internet connectivity to OU, as well as the ability to terminate dedicated research and education circuits and cloud provider circuits (e.g., Amazon, Google, Microsoft). This will position OU to host routed traffic for subsets of OFFN peers that need it. Finally, the planned campus network updates allow on-campus research facilities greater capacity and throughput, via engineered connections to OFFN services, to improve time-to-science statewide.
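To give a rough sense of what the move from 10GE to 25/40/100GE (and eventually 400GE) could mean for time-to-science, the following minimal sketch computes an idealized lower bound on transfer time at each line rate. The 1 TB dataset size is a hypothetical example, and the calculation assumes the full line rate is achieved, ignoring protocol overhead, disk speed and contention, so real transfers will be slower.

```python
def ideal_transfer_seconds(size_terabytes: float, link_gbps: float) -> float:
    """Lower bound on transfer time: payload bits divided by line rate."""
    size_bits = size_terabytes * 1e12 * 8  # decimal terabytes to bits
    return size_bits / (link_gbps * 1e9)   # Gbps to bits per second


# Line rates named in the refresh plan above (10GE today; 25/40/100GE next; 400GE later).
LINK_RATES_GBPS = [10, 25, 40, 100, 400]

# Hypothetical dataset size, chosen only for illustration.
DATASET_TB = 1.0

transfer_times = {
    rate: ideal_transfer_seconds(DATASET_TB, rate) for rate in LINK_RATES_GBPS
}

for rate, seconds in transfer_times.items():
    print(f"{rate:>3}GE: {seconds:7.1f} s for {DATASET_TB} TB (idealized)")
```

Under these assumptions, a tenfold increase in line rate gives a tenfold reduction in the best-case transfer time.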

The OFFN site at OU Norman is in Four Partners Place, along with OSCER’s CI systems (below).

Switches (all SDN): 4 × 100GE 32-port Dell S5232F-ON; 2 × 25GE 48-port Dell S5248F-ON. Servers: 2 × Bastion/perfSONAR, dual Intel 4208 8C, 64 GB, dual 10GE ports (dual 100GE later); 2 × virtualization pool, dual Intel 6132 14C (2 nodes) or 4216 16C (1 node), 96 GB, dual 25GE ports (dual 100GE later), 40 TB spinning (raw); 2 × DTN, dual Intel 4216 16C, 96 GB, dual 100GE ports; 1 × FIONA, dual Intel 6134 8C, 384 GB, dual 25GE ports (dual 100GE later), 2.4 TB NVMe (raw).

At OUHSC, we’re planning to deploy the following:

Switches (all SDN): 2 × 100GE 32-port Dell S5232F-ON; 2 × 10GE 28-port Dell S4128F-ON. Servers: 2 × Bastion/perfSONAR, probably dual Intel 4208 8C, 64 GB, dual 10GE ports (dual 100GE later); 2 × DTN, probably dual Intel 4216 16C, 96 GB, dual 10GE ports (dual 100GE later).

1.2. Sooner Cluster Supercomputer: OU’s new cluster supercomputer (Sooner) is being deployed now. Two things have changed since previous clusters: (a) worldwide, hardware speedup per dollar has slowed, and (b) OU’s new acquisition approach is rolling year-by-year partial upgrades instead of monolithic large leases every 3-4 years. Our FY2021 purchases were almost entirely of support nodes, networks and storage, with minimal compute, but our FY2022 plan is primarily compute, a mix of Intel Ice Lake and AMD EPYC Rome. (Milan has a worse price/performance ratio.) OU’s FY2021 and FY2022 budgets are each ~$830K, covering hardware and annual support (the latter typically in the mid five figures). Future fiscal years are expected (not guaranteed) to be similar.

OSCER-owned Compute & GPUs: We’re keeping our old Haswell/Broadwell compute nodes in production, even as we’ve been adding Skylake, Cascade Lake, Ice Lake, Rome and, soon, Sapphire Rapids, Genoa and beyond. We have OU IT budget for a few GPUs per year, including a few low-end GPUs for debugging.

Condominium Compute & GPU: Users can buy compute nodes and GPUs (with our help) at any time. About half our old cluster, Schooner, is condominium; we expect Sooner to match. Condominium owners get to decide who runs on their nodes. OU’s CIO sponsors space/power/cooling/network and labor.

Storage: Ceph for home and scratch ~1.2 PB usable: 6 × object store, R740xd2, dual 4216 16C, 192 GB, 24 × 16 TB, 2 × 1.6 TB NVMe for WAL/DB, HDR100 2-port, erasure coding @ 4 data + 2 redundancy, 20% fallow (per Red Hat); 4 × metadata/monitoring/etc, R640, dual 4216 16C, 192 GB, HDR100 2-port.

Networks: InfiniBand: For old *Well, FDR10 36-port 40 Gbps 3:1 oversubscribed (27 downlinks to 9 uplinks, 13.3 Gbps fully saturated), 9 core switches; for new *Lake/EPYC, HDR 40-port 200 Gbps 4:1 oversubscribed (64 HDR100 downlinks to 8 HDR200 uplinks, 25 Gbps fully saturated), 4 core switches; Super-core EDR, 6 uplinks from each FDR10 core switch (2.16 Tbps total), 3 uplinks from each HDR core switch (2.4 Tbps total). Ethernet: 4 × 25GE 96-port Dell S5296F-ON; 4 × 100GE 32-port Dell S5232F-ON, uplinked to OFFN at 1 connection from each of these switches to each OFFN 100GE switch (1.6 Tbps total). IB-to-Ethernet Gateways: Some of our subsystems lack IB, and others use IP-over-IB for disk I/O. We currently have 6 temporary homemade gateways totaling 1 Tbps, which we’re replacing with 2 Mellanox Skyways totaling 1.6 Tbps (ordered, delivery expected Feb 2022).
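The oversubscription ratios and aggregate bandwidths quoted above can be reproduced with simple arithmetic. The sketch below is illustrative only. It assumes that each FDR10 uplink to the super-core carries 40 Gbps, that each HDR uplink carries 200 Gbps, and that the cluster's four 100GE switches connect to the four OFFN 100GE switches listed for the Norman site. These per-link rates and counts are assumptions chosen because they match the stated totals, not a description of the actual cabling.

```python
def oversubscription(down_links: int, down_gbps: float,
                     up_links: int, up_gbps: float) -> float:
    """Ratio of total downlink bandwidth to total uplink bandwidth on one switch."""
    return (down_links * down_gbps) / (up_links * up_gbps)


def saturated_share_gbps(down_gbps: float, ratio: float) -> float:
    """Per-downlink bandwidth when every downlink sends through the uplinks at once."""
    return down_gbps / ratio


# Leaf switches for the older Haswell/Broadwell nodes (FDR10, 40 Gbps per port).
fdr10_ratio = oversubscription(27, 40, 9, 40)
fdr10_share_gbps = saturated_share_gbps(40, fdr10_ratio)

# Leaf switches for the newer nodes (HDR100 downlinks, HDR200 uplinks).
hdr_ratio = oversubscription(64, 100, 8, 200)
hdr_share_gbps = saturated_share_gbps(100, hdr_ratio)

# Aggregate core-to-super-core bandwidth (assumed per-link rates, see lead-in).
fdr10_supercore_tbps = 9 * 6 * 40 / 1000   # 9 core switches, 6 uplinks each
hdr_supercore_tbps = 4 * 3 * 200 / 1000    # 4 core switches, 3 uplinks each

# Cluster-to-OFFN Ethernet uplinks: one 100GE link per switch pair (assumed 4 x 4).
offn_uplink_tbps = 4 * 4 * 100 / 1000

print(f"FDR10: {fdr10_ratio:.0f}:1, {fdr10_share_gbps:.1f} Gbps per node when saturated")
print(f"HDR:   {hdr_ratio:.0f}:1, {hdr_share_gbps:.1f} Gbps per node when saturated")
print(f"Super-core: {fdr10_supercore_tbps:.2f} Tbps (FDR10), {hdr_supercore_tbps:.1f} Tbps (HDR)")
print(f"OFFN uplinks: {offn_uplink_tbps:.1f} Tbps")
```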

1.3. OU Research Cloud (OURcloud): OURcloud uses Sooner’s OpenStack. We start at 3 Dell R640 servers with dual Intel Cascade Lake 6238R 28C, 768 GB RAM and dual HDR100 connections, with 3:1 oversubscription on CPU cores and 7/8 undersubscription on RAM (the remainder is reserved for the host OS and I/O buffering). One node is fallow for failover. More RAM and nodes can be added as needed.
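As a rough illustration of what these ratios imply for the initial deployment, the sketch below estimates schedulable virtual CPUs and guest RAM. It assumes that the 3:1 ratio is applied to physical cores (not hyperthreads) and that the fallow failover node contributes no schedulable capacity. Both are assumptions made for the example, and the variable names are hypothetical.

```python
SERVERS = 3              # Dell R640 nodes at initial deployment
FALLOW_SERVERS = 1       # held in reserve for failover
SOCKETS_PER_SERVER = 2
CORES_PER_SOCKET = 28    # Intel 6238R
RAM_PER_SERVER_GB = 768

CPU_OVERSUBSCRIPTION = 3   # 3 virtual CPUs per physical core (assumed basis)
RAM_FRACTION = 7 / 8       # share of RAM offered to guests

active_servers = SERVERS - FALLOW_SERVERS

# Per-node capacity offered to virtual machines.
vcpus_per_node = SOCKETS_PER_SERVER * CORES_PER_SOCKET * CPU_OVERSUBSCRIPTION
guest_ram_per_node_gb = RAM_PER_SERVER_GB * RAM_FRACTION

# Capacity across the nodes that actively host guests.
schedulable_vcpus = active_servers * vcpus_per_node
schedulable_ram_gb = active_servers * guest_ram_per_node_gb

print(f"Per node: {vcpus_per_node} vCPUs, {guest_ram_per_node_gb:.0f} GB guest RAM")
print(f"Schedulable: {schedulable_vcpus} vCPUs, {schedulable_ram_gb:.0f} GB guest RAM")
```

Under these assumptions, each node offers 168 vCPUs and 672 GB of guest RAM, or 336 vCPUs and 1,344 GB across the two active nodes.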

1.4. OU Research Disk (OURdisk): A large Ceph system mounted on our supercomputer and OURcloud, and to be available on external servers. At OU Norman, 19 × R740xd2 servers, configured like Sooner’s Ceph nodes, plus 5 metadata/monitoring nodes (2 deployed, 3 ordered), in 8+3 erasure coding (~4.2 PB usable, ~3 PB committed). At OU Health Sciences Center in Oklahoma City, 17 object nodes (~3.8 PB usable), otherwise the same. We’ll have a small replicated zone between Norman and OUHSC; we expect modest uptake because of double cost.
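The usable capacities quoted for Sooner's Ceph storage and for OURdisk follow from the raw drive capacity, the erasure coding efficiency k/(k+m), and the 20% held fallow. The sketch below works through that arithmetic. It assumes that every object node holds 24 × 16 TB drives (as stated for Sooner's Ceph nodes), uses decimal units, and ignores filesystem and Ceph metadata overhead, so the results are approximate.

```python
def usable_tb(nodes: int, drives_per_node: int, drive_tb: float,
              data_chunks: int, coding_chunks: int,
              fallow_fraction: float) -> float:
    """Approximate usable capacity of an erasure-coded pool, in decimal TB."""
    raw_tb = nodes * drives_per_node * drive_tb
    ec_efficiency = data_chunks / (data_chunks + coding_chunks)
    return raw_tb * ec_efficiency * (1 - fallow_fraction)


DRIVES_PER_NODE = 24
DRIVE_TB = 16
FALLOW = 0.20  # 20% kept free

# (object nodes, data chunks k, coding chunks m) for each pool described above.
pools = {
    "Sooner home/scratch": (6, 4, 2),
    "OURdisk Norman": (19, 8, 3),
    "OURdisk OUHSC": (17, 8, 3),
}

pools_usable_pb = {
    name: usable_tb(nodes, DRIVES_PER_NODE, DRIVE_TB, k, m, FALLOW) / 1000
    for name, (nodes, k, m) in pools.items()
}

for name, petabytes in pools_usable_pb.items():
    print(f"{name}: ~{petabytes:.1f} PB usable")
```

The 8+3 layout stores 8/11 (about 73%) of raw capacity as data, compared with 4/6 (about 67%) for 4+2, which is one reason the wider code suits the larger OURdisk pools.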

1.5. OURRstore Tape Archive: Funded by an NSF Major Research Instrumentation grant, the OU & Regional Research Store is available to researchers not only at OU, but across Oklahoma, in other Great Plains Network states (AR, KS, MO, NE, SD) and in all EPSCoR jurisdictions. We initially purchased ~11K tape cartridge slots, but we’ll roughly double that this fiscal year (NSF has approved), for a total capacity of 200+ PB.

1.6. Globus: OU is in the process of acquiring a campuswide Globus license, which will allow file sharing to 3rd parties from any OU storage system, including OURRstore and OURdisk.

1.7. Legally Regulated Enclaves: OU is planning to deploy enclaves for legally regulated computing and data (HIPAA, CUI, etc) in 2022. Planning has already been underway for over a year.

1.8. OSCER Team: OSCER currently has a director (CC* PI Neeman), 4 system administrators, and 0.7 FTE of Research Computing Facilitators (with a new Facilitator to be hired).

1.9. OU Technical Capabilities

1.9.1. IPv6: OU leverages a provider-independent allocation (2620:0000:2B20::/48) from ARIN, and maintains an IPv6-ready infrastructure across its three campuses, as well as its geographically diverse data center. Currently, OU leverages one IPv6 capable commercial Internet provider (Cox), as well as the IPv6 enabled regional optical network (RON) provider, OneNet (see below).

1.9.2. InCommon: OU is an active InCommon participant. OU leverages InCommon for identity and access federation (Shibboleth), web service security certificates (SSL), as well as eduroam.

1.9.3. Security: OU maintains a robust and distributed set of hardware and software security controls, a mature testing and training program for the OU community (“human firewall”), a dedicated Security Operations (SecOps) staff, a centralized Governance, Risk and Compliance (GRC) group, as well as a strong collaborative relationship with OneNet for upstream DDoS and other attack vector mitigations (see below). OU IT has 7 documented levels of data classification, with an extensive risk assessment program for technology purchases. OU maintains 90 days of audit logs, with real time searching capabilities, and OU archives that data for up to 1 year, for forensic investigation. OSCER has been meeting biweekly with GRC for over a year, and has recently begun weekly meetings with SecOps.

1.9.4. MANRS: While OU does implement best practice controls around the protection of its BGP Autonomous System Numbers (ASNs), the OU network is not classified as a carrier ISP, IXP, or CDN, and is not a formal member of MANRS. OU leverages OneNet as a primary research and Internet provider, and OneNet is currently an active member of MANRS (see below).

1.10. Relationship between OSCER and OU IT: OSCER is a division of OU IT. CC* Co-PI Neeman, OSCER’s Director, reports directly to OU’s Chief Information Officer (see CIO letter).

2. Statewide CI Plan (Much of this text was provided by OneNet.)

2.1. OneNet

Since 1996, OneNet has operated as the research and education network for all of Oklahoma’s public higher education institutions, and also serves nearly every private higher education institution, as well as public and private K-12 schools, libraries, health care centers and government agencies (state, local, federal, tribal). Over many years of operation, OneNet has continually refined its infrastructure to meet the ever-growing requirements of research institutions. Via public and private partnerships, optical fiber has either been built or acquired to span the distances between OU campuses in Oklahoma City and Norman and OSU campuses in Stillwater and Tulsa, at ever increasing bandwidths, by deploying both evolutionary and revolutionary technology.

OneNet’s strong focus on Oklahoma’s CI needs began with the creation of the first Internet2 network in 1998. By utilizing partnerships and experience with fiber construction, Oklahoma’s universities were among the first to connect to the new national network and have since been provided very high levels of bandwidth to both regional and national research backbones. In past years, the NSF EPSCoR RII C2 grant funded upgrades of OneNet’s DWDM network, to provide research bandwidth more cost effectively.

Concurrently with the C2 project, Oklahoma was awarded an NTIA BTOP CCI grant, providing nearly $74M to build fiber for middle-mile initiatives through areas of the state underserved by broadband providers. The Oklahoma Community Anchor Network (OCAN) directly serves 93 community anchors and is positioned to serve Oklahomans across 35 counties. OneNet is responsible for the ongoing operations and maintenance of OCAN and is establishing new partnerships with telecommunications providers to meet the needs of OneNet’s constituents in areas of the state not directly served by OCAN.

In the fall of 2012, OneNet recognized an opportunity with Internet2’s newly unveiled Innovation Platform, rapidly taking steps to garner the support of research institutions and to coordinate appropriate hardware and fiber, to become the first official member connection to Internet2’s new nationwide Software Defined Network (SDN). The 100GE connection was leveraged for research activity, as well as the diverse needs of community anchors throughout Oklahoma. At the end of the innovation platform project and through a partnership with the Great Plains Network (GPN), OneNet transitioned the 100GE to a full Internet2 connection with access to Internet2 and GPN member institutions.

OneNet continues to expand and upgrade Oklahoma’s CI. Currently, 100GE service is extended to R1 institutions and more in Tulsa, Stillwater, Oklahoma City and Norman. Beyond the traditional research ring, OneNet anticipates that the 10-to-100-fold increases in bandwidth being experienced by over a dozen higher education institutions directly served by OCAN in rural areas of Oklahoma will lead to new collaborations and opportunities for partnership.

In addition to bandwidth, OneNet also focuses on extending other CI technologies, with robust monitoring and measurement tools. OneNet is leading an effort to deploy and centrally manage perfSONAR devices statewide and to bridge SDN infrastructures with production networks.

2.2. OneNet Technical Capabilities

2.2.1. IPv6: OneNet has fully supported IPv6 across state infrastructure for several years. With a direct allotment (2610:1D8::/32) from ARIN, OneNet can serve all constituents and maintains IPv6 peering relationships with nearly all external network transit providers and peers. OneNet provides IPv6 addressing and routing to OFFN connected campus Science DMZs.
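To illustrate the scale of the two ARIN allocations mentioned in this plan (OU's /48 and OneNet's /32), the following sketch uses Python's standard `ipaddress` module to count how many standard-size subnets each prefix can hold. It also shows that OU's prefix does not fall inside OneNet's, which is what a provider-independent allocation implies. Splitting into /64 and /48 subnets is a common convention assumed here, not a statement of how either network is actually subdivided.

```python
import ipaddress

# Prefixes as stated in this plan.
ou_prefix = ipaddress.ip_network("2620:0000:2B20::/48")
onenet_prefix = ipaddress.ip_network("2610:1D8::/32")

# Number of /64 LAN-sized subnets available within OU's /48.
ou_slash64_count = 2 ** (64 - ou_prefix.prefixlen)

# Number of /48 site-sized prefixes available within OneNet's /32.
onenet_slash48_count = 2 ** (48 - onenet_prefix.prefixlen)

# A provider-independent prefix is not carved out of the upstream provider's block.
ou_inside_onenet = ou_prefix.subnet_of(onenet_prefix)

print(f"OU prefix (canonical form): {ou_prefix}")          # 2620:0:2b20::/48
print(f"/64 subnets in OU's /48: {ou_slash64_count}")       # 65536
print(f"/48 prefixes in OneNet's /32: {onenet_slash48_count}")  # 65536
print(f"OU prefix inside OneNet's block: {ou_inside_onenet}")   # False
```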

2.2.2. InCommon: OneNet is an InCommon member and will encourage and facilitate InCommon participation among participating institutions where possible.

2.2.3. Security: OneNet provides DDoS (Distributed Denial of Service) protections for the network and campuses it serves, along with policy coordination and guidance. OneNet and OU work together to determine OFFN security and access policy, following the general guidelines and best practices for a regional Science DMZ, and work with the OFFN connected campuses to design, configure, deploy, support and maintain campus Science DMZ best practices.

2.2.4. MANRS: OneNet is an active participant in the MANRS initiative and has committed to and implemented the MANRS recommended industry best practices and solutions in order to address the most common Internet security threats. OneNet has implemented both the compulsory and recommended actions on both the OneNet and the OFFN network routing infrastructure.

2.3. OFFN Statewide

In 2013, via NSF CC* funding (NSF 1341028), OFFN was established as a separate high performance research and education network that leverages OneNet networking investments and facilities, connecting higher education CI facilities within Oklahoma at 10 to 100 Gbps. Since 2014, OFFN has provided connected institutions with a statewide Science DMZ, allowing researchers across Oklahoma to connect reliably to high performance network-based resources statewide, regionally, nationally and internationally. OFFN has been expanded four times, in 2017, 2019, 2020 and 2021, currently connecting/soon to connect 20 campuses of 18 institutions statewide (see Fig. 2).

2.3.1. OFFN Across Oklahoma: OFFN reaches/is funded to reach 20 campuses/18 institutions statewide. PhD-granting: OU, Oklahoma State U, U Tulsa. Non-PhD-granting: U Central Oklahoma, Langston U (Oklahoma’s only Historically Black College/University), Cameron U, East Central U, Rogers State U, Northeastern State U (Minority Serving Institution), Southeastern Oklahoma State U (MSI), Southwestern Oklahoma State U, Oral Roberts U, Oklahoma Christian U, U Science & Arts of Oklahoma, Oklahoma City U, OSU Institute of Technology, OSU-Oklahoma City. Community College: Redlands CC.

2.3.2. Clusters at Other Institutions: Cluster supercomputers are provided not only by OU but also by: Oklahoma State U; U Tulsa; U Central Oklahoma; Langston U (Oklahoma’s only Historically Black College/University and one of the few non-PhD-granting HBCUs with an HPC center); Oral Roberts U.

2.3.3. Statewide OFFN Sustainability: Each participating campus is committed to sustaining OFFN beyond the life of the award that the campus participates in. OneNet is committed to sustaining this project beyond the lifetime of these awards, and the project is fully supported by the Chancellor of the Oklahoma State Regents for Higher Education (OneNet’s parent organization).

2.3.4. OFFN Grants: (1) “OneOklahoma Friction Free Network,” $500K. (2) “CC*IIE Engineer: A Model for Advanced Cyberinfrastructure Research and Education Facilitators,” $400K. (3) “Multiple Organization Regional OneOklahoma Friction Free Network,” $334K. (4) “Extended Vital Education Reach Multiple Organization Regional OneOklahoma Friction Free Network,” $500K. (5) “Small Institution Multiple Organization Regional OneOklahoma Friction Free Network,” $232K. (6) “Extended Small Institution - Multiple Organization Regional - OneOklahoma Friction Free Network,” $415K.