Data Transfer Node Reference Implementation

ESnet has assembled several reference implementations of hosts that can be deployed as a DTN or as a high-speed Globus file transfer test machine:

2023 ESnet6 50/100/200 Gb/s Capable DTN Design

The total cost of this server was around $25K in late 2022. These systems were deployed on ESnet in late 2022/2023 for ESnet6.

Hardware description

- Base System: Supermicro 2124US-TNRP 2U dual AMD socket SP3 server

  - onboard VGA, dual 10G RJ45, dual 10G SFP+, onboard dedicated IPMI RJ45

  - 1 PCI-E 4.0 x16 slot

  - 24 front access NVME hotswap bays

  - dual redundant hotswap 1200W PSU

- 2x AMD EPYC Milan 73F3

  - 16 cores each

  - 3.5GHz 240W TDP processor

- 256 GB RAM - 16x 16G DDR4 3200 ECC RDIMM

- 800G System Disk: 2x Micron 7300 MAX 800G U.2/2.5" NVME

- 16TB Data Disk: 10x Micron 9300 MAX 3.2TB U.2/2.5" NVME (configured as RAID10)

- NVIDIA MCX613106A-VDAT ConnectX-6 EN Adapter Card 200GbE

- Mellanox MMA1L10-CR Optical Transceiver 100GbE QSFP28 LC-LC 1310nm LR4 up to 10km

- OOB license for IPMI management

- 2x 1300W -48V DC PSU OR 2x 1200W AC PSU
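
The 16TB data disk figure follows from the RAID10 layout: ten 3.2TB drives give 32TB raw, and mirroring halves the usable space. A minimal sketch of that arithmetic, assuming conventional two-way mirror pairs (the function name `raid10_usable_tb` is illustrative, not part of any ESnet tooling):

```python
def raid10_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable capacity of a RAID10 array, assuming two-way mirror pairs."""
    if drive_count < 4 or drive_count % 2:
        raise ValueError("this sketch assumes an even number of drives, at least 4")
    # Every block is stored twice, so half of the raw capacity is usable.
    return drive_count * drive_tb / 2


raw_tb = 10 * 3.2                      # 10x Micron 9300 MAX 3.2TB
usable_tb = raid10_usable_tb(10, 3.2)  # the 16TB listed above
print(f"raw {raw_tb:.1f} TB, usable {usable_tb:.1f} TB")
```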

~2020 40/50/100 Gbps Capable DTN Design

The total cost of this server was around $21K in mid 2019. This system was deployed on ESnet in mid/late 2020. Please note that specifics on configuration will be available after full evaluation. Note that this server uses VROC, which requires the purchase of a premium license.

Configuration Details

Hardware description

- Base System: Gigabyte R281-NO0 dual socket P 2U server

  - Onboard: VGA, 2 x GbE RJ45 Intel i350, IPMI dedicated LAN

  - 24 x front access U.2 hotswap bays

  - 2 x rear access 2.5” SATA hotswap bays

  - Dual redundant hotswap 1600W PSU

- 2 x Intel Cascade Lake Xeon Gold 6246

  - 12 cores each

  - 3.3GHz 165W TDP processor

- 12 x 16G DDR4 2933 ECC RDIMM (192G total)

- 10 x Intel P4610 1.6TB U.2/2.5” PCIe NVMe 3.0 x4 Drives (connect directly to CPU for VROC)

- 2 x Enterprise 960G 2.5” SATA SSD (OS, onboard Intel SATA RAID 1)

- Intel® Virtual RAID On CPU (VROC) RAID 0, 1, 10, 5

- Mellanox ConnectX-5 EN MCX516A-CCAT 40/50/100GbE dual-port QSFP28 NIC

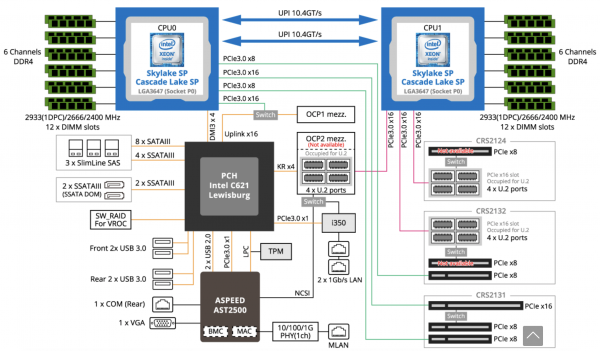

System Block Diagram

Preliminary System Performance

Initial system performance was evaluated utilizing two identical units, and a topology that consisted of:

- Back to back 40Gbps active cable

- 40Gbps local to 100Gbps WAN connection(s) with an RTT of 40ms (Berkeley CA to Chicago IL, round trip)
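
For the WAN leg of this topology, the amount of data in flight at any moment is the bandwidth-delay product of the link rate and the 40ms RTT. The sketch below works that figure out for the 40Gbps and 100Gbps rates mentioned above. It is plain arithmetic and not a description of how the test hosts were tuned, and `bdp_bytes` is an illustrative name.

```python
def bdp_bytes(rate_gbps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product in bytes for a given line rate and round-trip time."""
    bits_in_flight = rate_gbps * 1e9 * (rtt_ms / 1e3)
    return bits_in_flight / 8


RTT_MS = 40  # Berkeley CA to Chicago IL, round trip
for rate in (40, 100):
    megabytes = bdp_bytes(rate, RTT_MS) / 1e6
    print(f"{rate} Gbps x {RTT_MS} ms -> about {megabytes:.0f} MB in flight")
```

A single stream can only fill the path if the sender and receiver can buffer roughly this much data, which is one reason long-RTT tests behave differently from the back-to-back cable.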

The testing was performed on Ubuntu 18.04 using XFS on mdraid with O_DIRECT and standard POSIX I/O. The file sizes varied between 256 GB and 1 TB, with consistent results between file sizes, as well as consistent results when writing pseudo-random data vs all-zeroes. Testing revealed it was possible to maintain a sustained total of 80Gbps across both connections of the NIC. Future testing will focus on the use of 100Gbps cables and optics.

The disk configuration is somewhat more complicated. The test systems have employed several disk configurations:

- Using RAID-0, the best results were 126 Gbps write and 140 Gbps read with 8 disks on a single controller (PCIe multiplexor).

- Current research aims to increase the number of disks to 10 (with hopes of getting 155Gbps of raw performance) by re-arranging the disk placement on the PCIe multiplexor.

- PCIe 3.0 bandwidth is roughly 1 GB/s per lane, thus balancing across multiple drives is required to ensure peak performance.

- The best preliminary storage results with redundancy use mirroring in a RAID-10. The raw performance (to disk) is the same as above, but the effective write performance drops to about 60Gbps due to the resiliency requirements (every write is mirrored).
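
The figures in this list can be sanity-checked with some rough arithmetic. The sketch below assumes the ~1 GB/s per lane figure quoted above and the x4 link width of the P4610 drives. It ignores protocol overhead, per-drive limits, and any shared uplink on the multiplexor, so it gives an upper bound rather than a prediction. The function names are illustrative.

```python
GBYTES_PER_LANE = 1.0  # rough per-lane figure quoted in the list above
LANES_PER_DRIVE = 4    # the P4610 drives are PCIe 3.0 x4


def pcie_ceiling_gbps(drives: int) -> float:
    """Aggregate drive-link ceiling in Gbps, ignoring overhead and shared uplinks."""
    return drives * LANES_PER_DRIVE * GBYTES_PER_LANE * 8


def mirrored_write_gbps(raw_write_gbps: float, copies: int = 2) -> float:
    """Effective write rate when every block is written `copies` times."""
    return raw_write_gbps / copies


print(f"8 drives:  {pcie_ceiling_gbps(8):.0f} Gbps link ceiling")
print(f"10 drives: {pcie_ceiling_gbps(10):.0f} Gbps link ceiling")
print(f"RAID-10 write from 126 Gbps raw: {mirrored_write_gbps(126):.0f} Gbps")
```

The measured 126 Gbps write and 140 Gbps read sit well below the 8-drive link ceiling, and halving the raw write rate lands close to the roughly 60Gbps reported for RAID-10.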

Testing has revealed that not all 12 cores (on a single chip) are regularly active unless the degree of parallelism increases. A busy DTN will need more of them. Populating all memory channels in the RAM configuration also ensures peak performance, as the system is able to fully utilize and balance load as needed.
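
To illustrate why populating every memory channel matters, the sketch below estimates theoretical peak memory bandwidth per socket for the DDR4-2933 DIMMs listed above. It assumes six memory channels per socket for this Xeon generation and a 64-bit (8-byte) data bus per channel. These are platform assumptions rather than measurements from the test systems, and `peak_memory_gbytes` is an illustrative name.

```python
def peak_memory_gbytes(mt_per_s: float, channels: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s across the populated channels."""
    return mt_per_s * 1e6 * bus_bytes * channels / 1e9


DIMMS, SOCKETS = 12, 2
CHANNELS_PER_SOCKET = 6  # assumption: six channels per socket on this platform
dimms_per_socket = DIMMS // SOCKETS  # 6, i.e. one DIMM per channel

full = peak_memory_gbytes(2933, CHANNELS_PER_SOCKET)
half = peak_memory_gbytes(2933, CHANNELS_PER_SOCKET // 2)
print(f"all channels populated:  {full:.1f} GB/s per socket")
print(f"half channels populated: {half:.1f} GB/s per socket")
```

Under these assumptions, twelve DIMMs across two sockets put one DIMM on each channel, and leaving channels empty reduces the theoretical peak proportionally.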