Science DMZ Architecture

The capabilities required to effectively deploy and support high-performance science applications include high bandwidth, advanced features, and capable gear that does not compromise on performance. Operational requirements drive the need for simplicity, accountability, accuracy, and the easy integration of test and measurement services. Security requirements come from the need to ensure correctness, prevent misuse, and avoid embarrassment or other negative publicity that can compromise the reputation of the site or the science.

The Science DMZ architecture meets these needs by instantiating a simple, scalable network enclave that explicitly accommodates high-performance science applications while explicitly excluding general-purpose computing and the additional complexities that go with it.

Ideally, the Science DMZ is connected directly to the border router in order to minimize the number of devices that must be configured to support high-performance data transfer and other scientific applications. Achieving high performance is very difficult with default system and network device configurations, and locating the Science DMZ at the site perimeter simplifies the system and network tuning processes. Also, if there is a performance problem, it is much easier to troubleshoot a handful of devices than a large general-purpose LAN infrastructure.

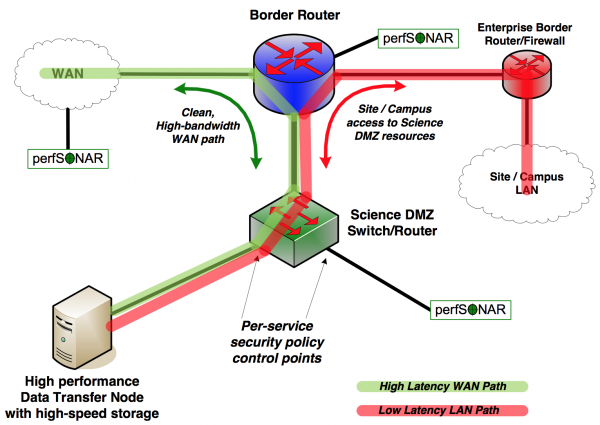

Simple Science DMZ Diagram

A simple Science DMZ has several essential components. These include dedicated access to high-performance wide area networks and advanced services infrastructures, high-performance network equipment, and dedicated science resources such as Data Transfer Nodes. A notional diagram of a simple Science DMZ showing these components, along with data paths, accompanies this section.

The essential components and a simple architecture for a Science DMZ are shown in the Figure above. The Data Transfer Node (DTN) is connected directly to a high-performance Science DMZ switch or router, which is connected directly to the border router. The DTN’s job is to efficiently and effectively move science data to and from remote sites and facilities, and everything in the Science DMZ is aimed at this goal. The security policy enforcement for the DTN is done using access control lists on the Science DMZ switch or router, not on a separate firewall.
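As an illustration of how access control lists can enforce policy without a stateful firewall, the following is a minimal sketch of first-match ACL evaluation with an implicit deny. It is not taken from any particular switch or router; the `AclRule` class, the `evaluate` function, the addresses (drawn from the reserved documentation ranges), and the port numbers are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class AclRule:
    """One stateless ACL entry (hypothetical structure for illustration)."""
    action: str       # "permit" or "deny"
    src: str          # source prefix in CIDR notation
    dst: str          # destination prefix in CIDR notation
    dst_ports: range  # destination ports, built as range(low, high + 1)


def evaluate(rules, src_ip, dst_ip, dst_port):
    """Return the action of the first matching rule; deny if none match."""
    for rule in rules:
        if (ip_address(src_ip) in ip_network(rule.src)
                and ip_address(dst_ip) in ip_network(rule.dst)
                and dst_port in rule.dst_ports):
            return rule.action
    return "deny"  # implicit deny at the end of the list


# Assumed policy: one collaborator prefix may reach the DTN's transfer ports.
DTN = "192.0.2.10/32"  # documentation address standing in for the DTN
RULES = [
    AclRule("permit", "198.51.100.0/24", DTN, range(2811, 2812)),    # assumed control port
    AclRule("permit", "198.51.100.0/24", DTN, range(50000, 51001)),  # assumed data port range
]

print(evaluate(RULES, "198.51.100.7", "192.0.2.10", 2811))  # collaborator host
print(evaluate(RULES, "203.0.113.5", "192.0.2.10", 2811))   # unknown host
```

Because each packet is checked against a short, fixed list with no per-connection state, this kind of filtering can be done in the forwarding hardware of the switch or router, which is why it suits a small set of known science services.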

Data transfers from offsite to the DTN only need to traverse two network devices - the border router and the Science DMZ switch/router (green path). This means that the high-latency or long-distance part of the data transfer traverses a small number of high-quality devices which are specifically engineered for performance.

If data needs to be transferred from the DTN to a device within the local site, the latency is small, so performance problems caused by the enterprise firewall have less impact on the transfer (red path).
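One way to see why latency matters so much is the widely used Mathis approximation for loss-limited TCP throughput, roughly MSS / (RTT × √p), where p is the packet loss rate. The sketch below applies it to a short local path and a long wide-area path. The constant factor is taken as 1 for simplicity, and the MSS, loss rate, and round-trip times are assumed values for illustration, not measurements from any site.

```python
import math


def mathis_throughput_bps(mss_bytes, rtt_seconds, loss_rate):
    """Approximate upper bound on single-stream TCP throughput in bits/s.

    Uses the simplified Mathis model: MSS / (RTT * sqrt(loss)), constant ~1.
    """
    return (mss_bytes * 8) / (rtt_seconds * math.sqrt(loss_rate))


MSS = 1460    # bytes, typical for a 1500-byte MTU (assumed)
LOSS = 1e-4   # one packet in 10,000 lost (assumed)

for label, rtt in [("local path, 1 ms RTT", 0.001),
                   ("wide area path, 50 ms RTT", 0.050)]:
    mbps = mathis_throughput_bps(MSS, rtt, LOSS) / 1e6
    print(f"{label}: about {mbps:.0f} Mbit/s")
```

Under these assumptions the same loss rate allows roughly 1.2 Gbit/s on the local path but only about 23 Mbit/s on the wide-area path, a factor of 50 that follows directly from the ratio of round-trip times. This is why the long-distance portion of the path is the part that most needs to be loss-free.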

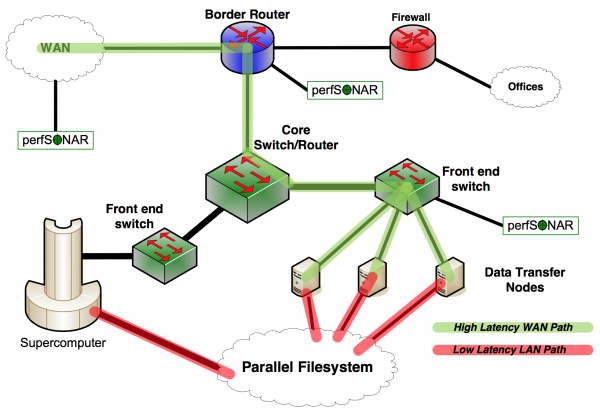

Supercomputer Center Network

The accompanying notional diagram illustrates a simplified supercomputer center network. While this may not look much like the simple Science DMZ diagram above, the same principles are used in its design.

In the case of the supercomputer center network, the vast majority of the network infrastructure is what one might call the Science DMZ. It is built to handle high-rate data flows without packet loss, and designed to allow easy troubleshooting and fault isolation. Test and measurement is integrated into the infrastructure from the beginning, so that problems can be located and resolved quickly, regardless of whether the local infrastructure is at fault. Note also that access to the parallel filesystem by wide area data transfers is via data transfer nodes that are dedicated to wide area data transfer tasks. When data sets are transferred to the DTN and written to the parallel filesystem, the data are immediately available on the supercomputer resources without the need for double-copying the data. However, all the advantages of a DTN - dedicated hosts, proper tools, and correct configuration - are preserved.

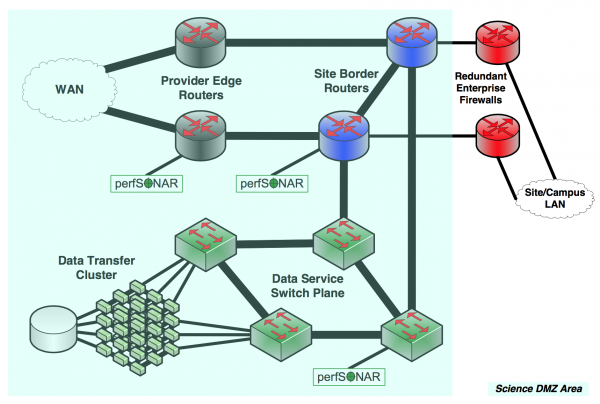

Big Data Site

For sites that handle very large data volumes (e.g., for big experiments such as the LHC), individual data transfer nodes are not enough. These sites deploy data transfer clusters, and these groups of machines serve data from multi-petabyte data stores. Even so, the principles of the Science DMZ apply - dedicated systems are used for data transfer, and the path to the wide area is clean, simple, and easy to troubleshoot. Test and measurement are integrated in multiple locations to enable fault isolation. This network is similar to the supercomputer center example in that the wide area data path covers the entire network front-end.

This network has redundant connections to the research network backbone. The business portion of the network takes advantage of the high-capacity redundant infrastructure deployed for serving science data, and deploys redundant firewalls to ensure uptime. However, the science data flows do not traverse these devices - appropriate security controls for the data service are implemented in the routing and switching plane. This is done both to keep the firewalls from causing performance problems and because the extremely high data rates (tens of gigabits to hundreds of gigabits per second) are beyond the capacity of firewall hardware.
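To give a sense of scale for these data rates, the short calculation below estimates how long one petabyte takes to move at several sustained rates. It is a back-of-the-envelope sketch: it assumes a decimal petabyte (10^15 bytes) and a perfectly sustained rate with no protocol overhead, and the specific rates are chosen only as examples within the range mentioned above.

```python
def transfer_time_hours(data_bytes, rate_bits_per_second):
    """Ideal transfer time in hours at a perfectly sustained rate."""
    return data_bytes * 8 / rate_bits_per_second / 3600


PETABYTE = 10**15  # decimal petabyte in bytes (assumed unit)

for gbps in (10, 40, 100):
    hours = transfer_time_hours(PETABYTE, gbps * 10**9)
    print(f"1 PB at {gbps} Gbit/s: about {hours:.1f} hours")
```

Even in this ideal case, a single petabyte occupies a 10 Gbit/s path for more than nine days and a 100 Gbit/s path for most of a day, so any device in the path that cannot sustain the full rate directly extends multi-petabyte transfers by days.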

These examples illustrate the flexibility and utility of the Science DMZ principles. In general, high-performance science applications can succeed in a Science DMZ where they might have difficulty achieving the necessary performance in a general-purpose network.