Interrupt Binding

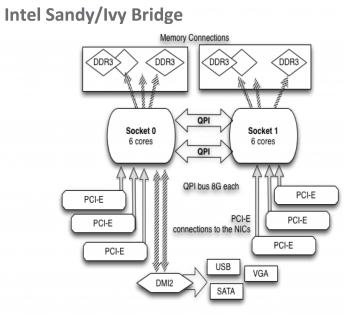

To maximize single-stream performance (both TCP and UDP) on NUMA systems (e.g., Intel Sandy Bridge or Ivy Bridge motherboards), you need to pay attention to which CPU socket and core are being used.

As the figure above shows, the PCI slot for the NIC is directly attached to only one of the two CPU sockets. There is a large performance penalty if either the interrupts or the application run on the wrong socket, because all traffic must then cross the QPI bus between the sockets. On a 40G/100G PCIe Gen3 host, TCP and UDP performance can be up to 2x slower if you are using cores on the wrong CPU socket. It is important that both the NIC IRQs and the application use the correct CPU socket.

To control which cores handle the NIC interrupts, you need to disable irqbalance and then bind the interrupts to cores on a specific CPU socket. To do this, run the vendor-supplied IRQ script at boot time.

For example:

- systemctl stop irqbalance

- systemctl disable irqbalance

- Mellanox: /usr/sbin/set_irq_affinity_bynode.sh socket ethN

- Chelsio: /sbin/t4_perftune.sh

Where "ethN" is the name of your Ethernet device and "socket" is the NUMA node that the NIC is attached to. To find out which socket to pass to set_irq_affinity_bynode.sh, you can use this command:

cat /sys/class/net/ethN/device/numa_node

Or you can just add this to /etc/rc.local, with ETH set to the name of your Ethernet device:

/usr/sbin/set_irq_affinity_bynode.sh "$(cat "/sys/class/net/$ETH/device/numa_node")" "$ETH"
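If you would rather look up the NUMA node from a script, the same sysfs file can be read directly. The following is a minimal sketch, not part of any vendor tooling: the function name `nic_numa_node` and the `sysfs_root` parameter are hypothetical, chosen here for illustration. It treats a value of -1 (which the kernel reports when it has no NUMA information for the device) the same as a missing file.

```python
from pathlib import Path
from typing import Optional


def nic_numa_node(iface: str, sysfs_root: str = "/sys") -> Optional[int]:
    """Return the NUMA node of the NIC's PCI device, or None if unknown.

    `sysfs_root` is a hypothetical parameter that only exists so the
    function can be pointed at a test directory instead of the real /sys.
    """
    path = Path(sysfs_root) / "class" / "net" / iface / "device" / "numa_node"
    try:
        node = int(path.read_text().strip())
    except (OSError, ValueError):
        # No such interface, a virtual device, or unexpected file contents.
        return None
    # The kernel reports -1 when it has no NUMA information for the device.
    return node if node >= 0 else None
```

A result of None usually means there is nothing to bind to, for example on a single-socket host or a virtual interface.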

To determine the best cores to use for that NUMA node, use this command:

numactl --hardware

Note that the larger the 'node distance' reported by 'numactl --hardware', the more important it is to be using the optimal NUMA node.
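The core list and node distances that numactl prints are also available under /sys/devices/system/node, which is handy when scripting. The sketch below assumes that layout (nodeN/cpulist and nodeN/distance); the helper names and the `sysfs_root` parameter are hypothetical.

```python
from pathlib import Path
from typing import List


def parse_cpulist(text: str) -> List[int]:
    """Expand a kernel CPU list such as "0-7,16-23" into individual core IDs."""
    cpus: List[int] = []
    for part in text.strip().split(","):
        if not part:
            continue  # empty list, e.g. a memory-only node
        if "-" in part:
            first, last = part.split("-")
            cpus.extend(range(int(first), int(last) + 1))
        else:
            cpus.append(int(part))
    return cpus


def node_cpus(node: int, sysfs_root: str = "/sys") -> List[int]:
    """Return the core IDs that belong to the given NUMA node."""
    path = Path(sysfs_root) / "devices" / "system" / "node" / f"node{node}" / "cpulist"
    return parse_cpulist(path.read_text())


def node_distances(node: int, sysfs_root: str = "/sys") -> List[int]:
    """Return the distances from `node` to every node, indexed by node number."""
    path = Path(sysfs_root) / "devices" / "system" / "node" / f"node{node}" / "distance"
    return [int(value) for value in path.read_text().split()]
```

Calling `node_cpus()` with the NIC's NUMA node gives the candidate cores for both the IRQs and the application.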

On Linux, you can use the sched_setaffinity() system call or the numactl command-line tool to bind a process to a core.
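As an illustration of the system call route, Python exposes sched_setaffinity() on Linux as `os.sched_setaffinity`. The sketch below is one possible wrapper; the function name `pin_to_cpus` is hypothetical. It intersects the requested cores with the cores the process is already allowed to use, so that a container or cpuset limit produces a clear error instead of a failed system call.

```python
import os
from typing import Iterable, Set


def pin_to_cpus(cpus: Iterable[int], pid: int = 0) -> Set[int]:
    """Restrict process `pid` (0 means the caller) to the given cores.

    Returns the affinity set that is in effect afterwards.
    """
    allowed = os.sched_getaffinity(pid)
    wanted = set(cpus) & allowed
    if not wanted:
        raise ValueError(
            f"none of the requested cores are available; allowed: {sorted(allowed)}"
        )
    os.sched_setaffinity(pid, wanted)
    return os.sched_getaffinity(pid)
```

An application would typically call this once at startup, passing the cores of the NUMA node that the NIC is attached to.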

The network testing tools iperf3 and nuttcp both let you select the core from the command line: use the "-A" flag for iperf3 and the "-xc" flag for nuttcp.
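If you launch tests from a script, the affinity flag can be added when the command line is built. This is a small sketch for iperf3 only; the function name `iperf3_client_cmd` and its parameters are hypothetical. It relies on iperf3's "-A n" form to pin the client and the "-A n,m" form to also request core m on the server.

```python
from typing import List, Optional


def iperf3_client_cmd(
    server: str, client_core: int, server_core: Optional[int] = None
) -> List[str]:
    """Build an iperf3 client command line pinned to specific cores."""
    affinity = str(client_core)
    if server_core is not None:
        # "-A n,m": n is the client core, m is the core requested on the server.
        affinity += f",{server_core}"
    return ["iperf3", "-c", server, "-A", affinity]
```

The resulting list can be passed directly to `subprocess.run()`.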

For other programs, use numactl. For example:

numactl -N socketID program_name
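The same invocation can be wrapped for use from a launcher script. The sketch below is an assumption about how you might structure that, not an established tool; `numactl_cmd` and `run_on_node` are hypothetical names. It only prepends "numactl -N" to the program's own arguments.

```python
import shutil
import subprocess
from typing import List, Sequence


def numactl_cmd(node: int, program_argv: Sequence[str]) -> List[str]:
    """Prefix a command with "numactl -N <node>" to run it on that node's cores."""
    return ["numactl", "-N", str(node), *program_argv]


def run_on_node(node: int, program_argv: Sequence[str]) -> subprocess.CompletedProcess:
    """Run a program bound to the cores of one NUMA node and wait for it."""
    if shutil.which("numactl") is None:
        raise FileNotFoundError("numactl is not installed or not in PATH")
    return subprocess.run(numactl_cmd(node, program_argv), check=True)
```

Here `node` would be the value read from the NIC's numa_node file.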

If you are using perfSONAR 4.x and using pScheduler to launch these tools, you don't need to worry about this, as pScheduler will automatically determine which CPU socket to use.

To see the CPU impact of IRQ binding, use mpstat. For example:

mpstat -P ALL 1
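As a complement to mpstat, you can check which cores are actually receiving the NIC's interrupts by summing the per-CPU counters in /proc/interrupts. The following is a rough sketch; the function name `irq_counts_per_cpu` is hypothetical, and it assumes that the interrupt names in the last column contain the interface name, which depends on the driver.

```python
from typing import Dict


def irq_counts_per_cpu(interrupts_text: str, name_filter: str) -> Dict[str, int]:
    """Sum interrupt counts per CPU for /proc/interrupts rows matching a name.

    `interrupts_text` is the full contents of /proc/interrupts;
    `name_filter` is a substring to match, such as the interface name.
    """
    lines = interrupts_text.splitlines()
    if not lines:
        return {}
    cpus = lines[0].split()  # header row: CPU0 CPU1 ...
    totals = {cpu: 0 for cpu in cpus}
    for line in lines[1:]:
        if name_filter not in line:
            continue
        fields = line.split()
        # fields[0] is the IRQ label ("24:"); the next len(cpus) fields are counts.
        counts = fields[1:1 + len(cpus)]
        if len(counts) < len(cpus) or not all(c.isdigit() for c in counts):
            continue  # summary rows such as ERR: have fewer columns
        for cpu, count in zip(cpus, counts):
            totals[cpu] += int(count)
    return totals
```

Reading the file twice a few seconds apart and comparing the totals shows which cores are handling interrupts during a test. With correct binding, the growth should be confined to cores on the NIC's NUMA node.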