Firewall Architecture

Introduction

Network firewalls are common in converged network architectures, where they are designed to protect large portions of the network from unknown threats. Experience has shown that this protection can come at a cost to use cases that rely on predictable performance, reducing the throughput of activities such as bulk data transfer. Firewall hardware has a daunting task to perform, and depending on the traffic pattern, the internal architecture of these devices can become a bottleneck.

Architectural Experiment

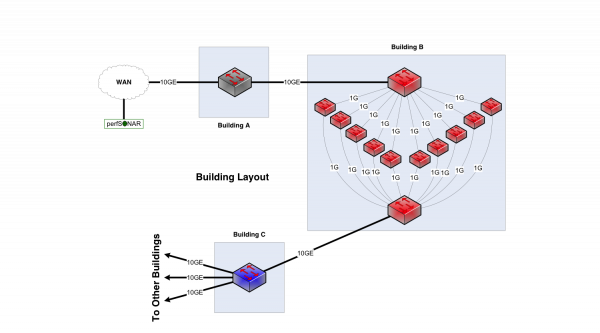

Consider a network connecting three buildings: A, B, and C. This is supposedly a 10Gbps network end to end, with 10Gbps links between the buildings. Building A houses the border router. So much work happens in Building B that the processing is spread across multiple processors to share the load affordably, and the results are then aggregated. Building C houses a distribution switch.

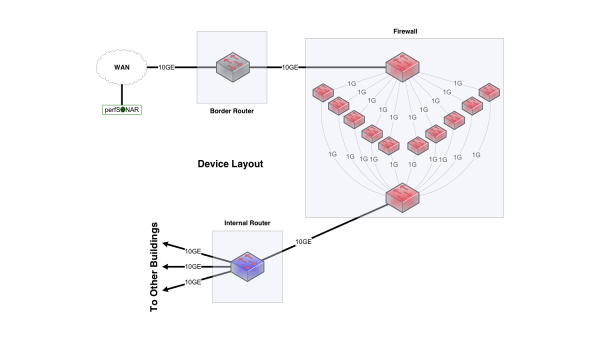

If you look inside Building B, it is obvious from a network engineering perspective that this is not a 10Gbps network. Because each processor handles only 1Gbps, the maximum per-flow data rate is 1Gbps, not 10Gbps. If you now convert the buildings into network elements while keeping their internals intact, you get routers and firewalls.

The small 1Gbps devices inside Building B are firewall processors, and they represent a common choice in firewall architecture. This parallel firewall architecture has been in use for years and persists for a number of reasons:

- Slower processors are cheaper

- They are typically adequate for a commodity traffic load (e.g. IMIX, with lower-rate, shorter-lived TCP flows)

The goal of this set of firewall processors is to inspect traffic in parallel. Mechanisms exist to send all traffic for a particular connection to the same processor, which simplifies state management and facilitates deeper analysis of headers and data. A consequence is that a single connection can never use more than one processor's capacity.
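One way to picture the connection-to-processor mapping is as a stable hash over the connection's addresses and ports. The following is a minimal sketch, not a description of any vendor's implementation: the names `FlowKey`, `pick_processor`, and `NUM_PROCESSORS` are hypothetical, the count of ten processors is an assumption, and SHA-256 merely stands in for whatever hash function real hardware might use.

```python
import hashlib
from typing import NamedTuple


class FlowKey(NamedTuple):
    """Hypothetical 5-tuple identifying one connection."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: str


# Assumption for illustration: ten 1Gbps processors behind a 10Gbps port.
NUM_PROCESSORS = 10


def pick_processor(flow: FlowKey, num_processors: int = NUM_PROCESSORS) -> int:
    """Map a flow to a processor index using a stable hash of its 5-tuple."""
    # Sort the two endpoints so both directions of a connection hash alike,
    # keeping all of the connection's state on a single processor.
    endpoints = sorted([(flow.src_ip, flow.src_port), (flow.dst_ip, flow.dst_port)])
    key = f"{endpoints}|{flow.protocol}".encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:8], "big") % num_processors


if __name__ == "__main__":
    forward = FlowKey("10.0.0.1", "192.0.2.7", 50000, 443, "tcp")
    reverse = FlowKey("192.0.2.7", "10.0.0.1", 443, 50000, "tcp")
    # Both directions of the same connection land on the same processor.
    print(pick_processor(forward), pick_processor(reverse))
```

Because the mapping depends only on the connection's identity, every packet of a large transfer lands on one processor, no matter how idle the others are.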

Architectural Reality

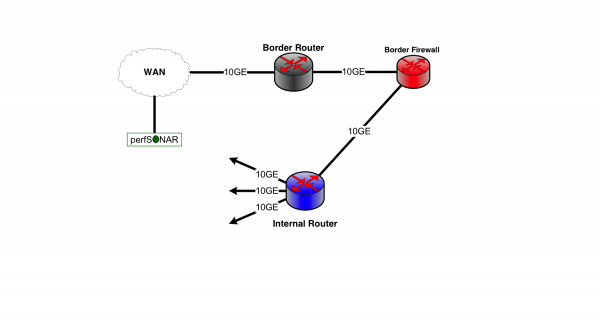

Expressed in the nomenclature of a typical network, the picture resembles what many sites have deployed today.

Converged traffic may not notice the behavior of a firewall because it consists of many connections, each with a low data rate. A high-rate transfer is different. Since each processor provides only a fraction of the total capacity, a high-rate TCP connection (single or parallel streams) will incur significant processing delays. These delays can cause the connection to stall, enter selective acknowledgement (SACK) recovery, or retransmit the entire sending window after a timer expires. All of these factors have a single symptom for the end user: low throughput. This is a significant limitation for data-intensive science applications.
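The per-flow ceiling can be put into rough numbers. The sketch below assumes the 1Gbps processors and 10Gbps links from the building example. The 10 TB dataset size, the 10Mbps commodity flow, and the function names are illustrative assumptions, and the calculation ignores protocol overhead and loss recovery, so real transfers through such a device would be slower still.

```python
def per_flow_ceiling_gbps(offered_gbps: float, processor_gbps: float) -> float:
    """A flow pinned to one processor cannot exceed that processor's rate."""
    return min(offered_gbps, processor_gbps)


def transfer_hours(dataset_terabytes: float, rate_gbps: float) -> float:
    """Best-case transfer time in hours at a constant rate."""
    bits = dataset_terabytes * 8e12  # 1 TB taken as 10**12 bytes
    seconds = bits / (rate_gbps * 1e9)
    return seconds / 3600


PROCESSOR_GBPS = 1.0  # per-processor rate from the building example
LINK_GBPS = 10.0      # nominal end-to-end link rate
DATASET_TB = 10.0     # assumed dataset size, for illustration only

# A commodity flow (assumed 10Mbps) fits easily within one processor.
commodity = per_flow_ceiling_gbps(0.01, PROCESSOR_GBPS)
# A science flow that could fill the link is capped at the processor rate.
science = per_flow_ceiling_gbps(LINK_GBPS, PROCESSOR_GBPS)

print(f"commodity flow ceiling: {commodity} Gbps")
print(f"science flow ceiling:   {science} Gbps")
print(f"hours at link rate:     {transfer_hours(DATASET_TB, LINK_GBPS):.1f}")
print(f"hours through firewall: {transfer_hours(DATASET_TB, science):.1f}")
```

Under these assumptions the commodity flow is unaffected, while the science transfer takes ten times longer than the link alone would allow: roughly 22 hours instead of just over 2.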

Firewall Advancements

As with all technology, firewalls will improve, and the trend will eventually lead to a device capable of handling a 10Gbps flow at nearly line rate, even with background traffic consuming buffering and processing resources internal to the device. When this technology first becomes available, it will be scarce and expensive. Meanwhile, science traffic has been capable of producing a high-rate single-stream TCP flow across long, clean networks for more than 10 years, and it is now routine to see 40Gbps and 100Gbps capable hosts. The firewall will always lag behind the technology curve, and a firewall that is expected to remain in service for multiple years will never match the expectations of end users with data-intensive use cases.

For these reasons, adopting the Science DMZ security practices is vital for the success of science.